|

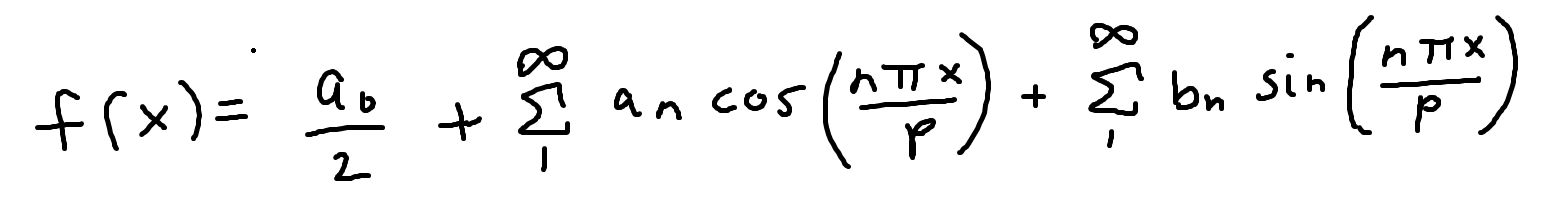

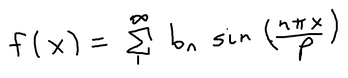

Fourier series. This was the super annoying memorize-the-formulas topic in my class, but it actually turned out to be one of the most interesting math concepts ever. So what is the Fourier series? What's the significance of it?

For that we first have to understand what a periodic function is. A function f(x) is periodic if there is some T > 0 such that f(x+T) = f(x) for every value of x. T is the period of f(x). Examples of periodic functions are sin(x) and cos(x), which both have a period of 2π. A Fourier series is essentially a way to expand a periodic function into an infinite series of sines and cosines.

So how do you find the Fourier series of a periodic function? Let p > 0 and let f(x) be a periodic function with period 2p, defined on the interval (-p, p). In the common convention, the Fourier series of f(x) is

f(x) = a_0/2 + Σ (n = 1 to ∞) [a_n cos(nπx/p) + b_n sin(nπx/p)]

where a_0, a_n and b_n are the Fourier coefficients:

a_0 = (1/p) ∫ f(x) dx

a_n = (1/p) ∫ f(x) cos(nπx/p) dx

b_n = (1/p) ∫ f(x) sin(nπx/p) dx

with each integral taken from -p to p.

Also, there are two things to keep in mind, assuming x is an integer,

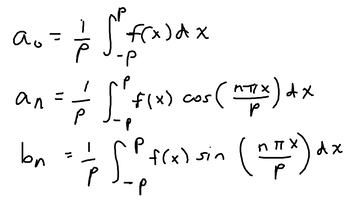

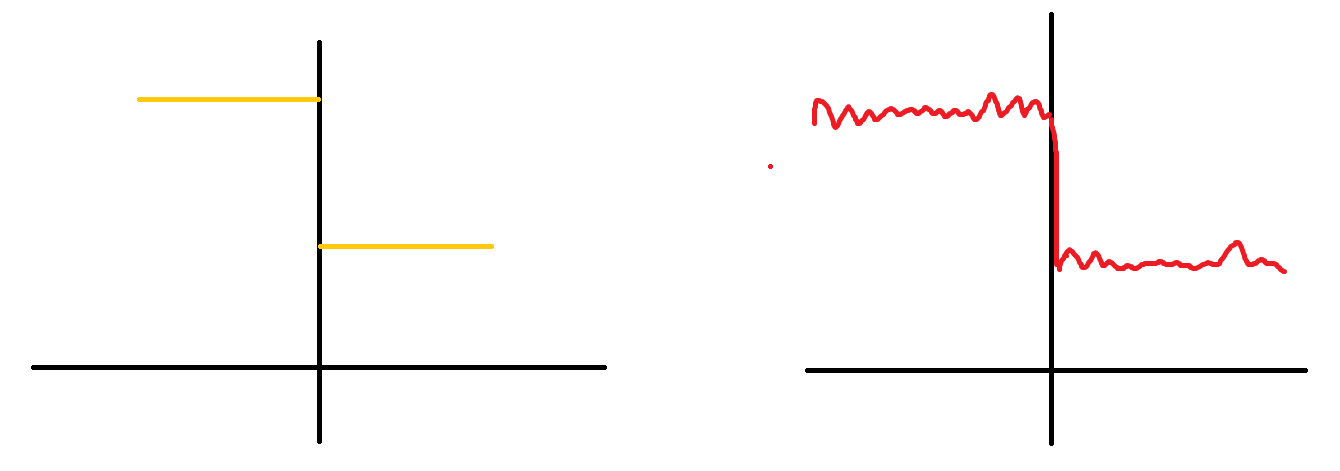

The left graph shows the original f(x) and the right graph shows its Fourier series approximation. When you graph the sum of the first n terms of the Fourier series, you get a close approximation of f(x), and the approximation improves as n grows. This is similar in spirit to a Taylor series, except that a Fourier series also works for discontinuous functions.

Fourier sine series

You can obtain the Fourier sine and cosine series from the general formula. For the Fourier sine series, we assume that f(x) is an odd function, which means that f(-x) = -f(x). If that's the case, then the a_0 and a_n terms become zero, because an odd function (f(x)) multiplied by an even function (cos(nπx/p)) is an odd function, and the integral of an odd function from -p to p is 0. For an odd function over the interval (-p, p), the areas on either side of the origin cancel each other out, which makes the integral evaluate to 0. Since a_n and a_0 are both equal to 0, the general Fourier series reduces to a series with just the b_n coefficients (only sines).

Fourier cosine series

You obtain the Fourier cosine series when you assume that f(x) is an even function, which means that f(-x) = f(x). Since f(x) is even, the b_n terms in the general Fourier series are 0, because an even function (f(x)) times an odd function (sin(nπx/p)) is an odd function. The integral of an odd function from -p to p is always zero, since the areas cancel each other. Therefore, with only the a_n and a_0 terms left, the Fourier series becomes the Fourier cosine series (only cosines).
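To make the odd/even argument concrete, here is a rough numerical sketch. It assumes the common convention f(x) ≈ a_0/2 + Σ [a_n cos(nπx/p) + b_n sin(nπx/p)] with each coefficient equal to (1/p) times an integral from -p to p, and it approximates those integrals with a simple midpoint rule. The function names, the sample count, and the square wave test function are my own choices for illustration, not anything from the class.

```python
import math

def fourier_coefficients(f, p, n_terms, samples=2000):
    """Approximate a_0, a_1..a_N and b_1..b_N of f on (-p, p) with the midpoint rule."""
    dx = 2 * p / samples
    # Midpoints of equal-width cells covering (-p, p).
    xs = [-p + (k + 0.5) * dx for k in range(samples)]

    a0 = sum(f(x) for x in xs) * dx / p
    a, b = [], []
    for n in range(1, n_terms + 1):
        w = n * math.pi / p
        a.append(sum(f(x) * math.cos(w * x) for x in xs) * dx / p)
        b.append(sum(f(x) * math.sin(w * x) for x in xs) * dx / p)
    return a0, a, b

def partial_sum(x, a0, a, b, p):
    """Evaluate a_0/2 plus the first len(a) cosine and sine terms at x."""
    total = a0 / 2
    for n, (an, bn) in enumerate(zip(a, b), start=1):
        w = n * math.pi / p
        total += an * math.cos(w * x) + bn * math.sin(w * x)
    return total

def square_wave(x):
    """An odd, discontinuous test function: -1 left of zero, +1 right of zero."""
    return 1.0 if x > 0 else -1.0

def parabola(x):
    """An even test function."""
    return x * x

p = math.pi
a0, a, b = fourier_coefficients(square_wave, p, 5)      # odd: cosine terms vanish
e0, ea, eb = fourier_coefficients(parabola, p, 5)       # even: sine terms vanish

print("square wave a_n:", [round(v, 6) for v in a])
print("square wave b_n:", [round(v, 6) for v in b])
print("parabola    b_n:", [round(v, 6) for v in eb])
print("partial sum at pi/2:", partial_sum(math.pi / 2, a0, a, b, p))
```

For the square wave, the a_n values come out as essentially zero and only the b_n terms survive, which is the sine series case. For the parabola it is the other way around. The first sine coefficient of the square wave should land close to 4/π, and adding more terms pulls the partial sum closer to the original function away from the jump.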


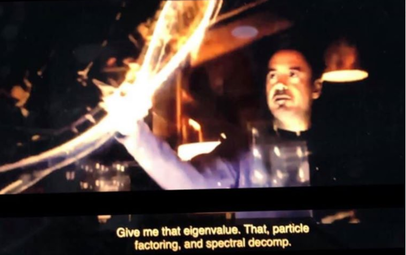

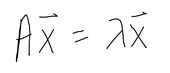

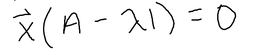

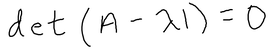

I never really understood the significance of eigenvalues and how they're applied visually until my Diff Equations class was over, which is not the best time to figure it out, but at least it'll help me in my future classes, lol. And it's actually pretty cool too!! Honestly, I think the moment I got super interested in learning why eigenvalues exist was when this scene came up in Avengers: Endgame. When Tony Stark mentioned the word "eigenvalue" I was like, "OMG, OMG I actually kinda know this...kinda...that word is very familiar to me so it counts." LOL, yup, this is when I was like hmm, I have to actually understand an eigenvalue's significance and application. So wow, that happened, and I never would have expected an Avengers movie to squeeze in a quick lesson in Diff Equations and Linear Algebra.

Anyways, getting back to the point, what is an eigenvalue? And what are eigenvalues used for? For example, when you solve an IVP (initial value problem) with matrices, you first solve for the eigenvalues by setting a determinant equal to zero, then you solve for the corresponding eigenvectors (from the eigenvalues), and then you plug the initial condition into the general solution to find the values of the constants. That sounds terrible, but it's actually not too bad. It's basically just solving linear equations, except that with a matrix you have to find the determinant. That's one way of using eigenvalues.

But what are they? And what's their real world application? Eigenvalues and their corresponding eigenvectors summarize matrix data. Eigenvectors are vectors whose direction is not changed when a linear transformation is applied to them. For instance, the red vector is an eigenvector since its direction never changes, even after the linear transformation has been applied. It only gets scaled, and the eigenvalue is that scale factor.
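
The "direction is not changed" idea can be checked with a couple of lines of arithmetic. This is just a small sketch: the 2 x 2 matrix and both vectors are made-up examples, and the helper names are mine.

```python
def apply(A, v):
    """Multiply a 2 x 2 matrix A by a 2D vector v."""
    return [A[0][0] * v[0] + A[0][1] * v[1],
            A[1][0] * v[0] + A[1][1] * v[1]]

def is_parallel(u, v, tol=1e-9):
    """Two 2D vectors point along the same line when their cross product is zero."""
    return abs(u[0] * v[1] - u[1] * v[0]) < tol

A = [[2.0, 1.0],
     [1.0, 2.0]]          # hypothetical transformation matrix

eigvec = [1.0, 1.0]       # A sends this to [3, 3]: same direction, scaled by 3
other = [1.0, 0.0]        # A sends this to [2, 1]: the direction changes

print(apply(A, eigvec), is_parallel(eigvec, apply(A, eigvec)))
print(apply(A, other), is_parallel(other, apply(A, other)))
```

Here [1, 1] only gets stretched by a factor of 3, so it is an eigenvector with eigenvalue 3, while [1, 0] gets knocked off its original line and is not an eigenvector.
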

For any n x n square matrix A, an n x 1 vector x is an eigenvector of A if Ax is a scalar multiple of x, that is, Ax = λx, where x is the eigenvector, A is the matrix, and λ (lambda) is the eigenvalue. When you move everything to one side, you end up with (A - λI)x = 0, where I is the identity matrix with the same dimensions as A, and λ is just a scale factor. If A - λI had an inverse, multiplying both sides by that inverse would force x = 0. However, we are assuming that x is not the null vector, which means that to satisfy the equation, A - λI can't have an inverse. A matrix that is non-invertible has a determinant of 0. Therefore, we can conclude that det(A - λI) = 0, and we just use this equation to solve for the eigenvalues of any n x n matrix.
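
Here is a minimal sketch of that determinant condition for the 2 x 2 case, where det(A - λI) = 0 expands into the quadratic λ² - (trace of A)·λ + det(A) = 0. The example matrix is made up, the function names are my own, and the sketch only handles real eigenvalues.

```python
import math

def eigenvalues_2x2(A):
    """Solve det(A - lambda*I) = 0 for a 2 x 2 matrix with real eigenvalues."""
    trace = A[0][0] + A[1][1]
    det = A[0][0] * A[1][1] - A[0][1] * A[1][0]
    disc = trace * trace - 4 * det
    if disc < 0:
        raise ValueError("complex eigenvalues are not handled in this sketch")
    root = math.sqrt(disc)
    return ((trace + root) / 2, (trace - root) / 2)

def det_shifted(A, lam):
    """Compute det(A - lam*I) directly, to check a candidate eigenvalue."""
    return (A[0][0] - lam) * (A[1][1] - lam) - A[0][1] * A[1][0]

M = [[2.0, 1.0],
     [1.0, 2.0]]          # hypothetical example matrix

lam1, lam2 = eigenvalues_2x2(M)
print(lam1, lam2)                                # 3.0 1.0
print(det_shifted(M, lam1), det_shifted(M, lam2))
```

Plugging either eigenvalue back into det(A - λI) gives 0, which is exactly the non-invertibility condition described above. For bigger matrices the same equation becomes a higher degree polynomial, so in practice you would reach for a linear algebra library instead of solving it by hand.
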

Eigenvalues and eigenvectors have tons of real world applications, such as image compression, clustering in data science, prediction, and the PageRank algorithm. |
